Test Your English Pronunciation with AI

The problem: why self-assessing pronunciation is so hard

If you study English on your own, one nagging question comes up early: is my "th" actually right? It is hard to tell just by listening to your own recording, because your brain already normalizes the sound you produced. A human teacher would solve that, but lessons are expensive, tied to a schedule, and not available every time you want to practice.

AI pronunciation assessment solves this in three steps:

1. It shows you an English sentence and asks you to read it aloud

2. It listens to your audio and compares it, phoneme by phoneme, against a native speaker's reference pronunciation

3. It returns a score from 0 to 100 for each word, each syllable, and each individual phoneme
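To make step 3 concrete, here is a minimal sketch of what a word / syllable / phoneme score hierarchy could look like and how you might pull out the sounds that need the most work. The class names, the `weakest_phonemes` helper, and the numbers are all illustrative assumptions, not Brainiall's actual response format.

```python
from dataclasses import dataclass, field

# Hypothetical result types: names and fields are assumptions for illustration.
@dataclass
class PhonemeScore:
    symbol: str
    score: int  # 0-100

@dataclass
class SyllableScore:
    text: str
    score: int  # 0-100
    phonemes: list[PhonemeScore] = field(default_factory=list)

@dataclass
class WordScore:
    text: str
    score: int  # 0-100
    syllables: list[SyllableScore] = field(default_factory=list)

def weakest_phonemes(words, limit=3):
    """Return up to `limit` (word, phoneme, score) tuples, lowest score first."""
    flat = [
        (word.text, phoneme.symbol, phoneme.score)
        for word in words
        for syllable in word.syllables
        for phoneme in syllable.phonemes
    ]
    return sorted(flat, key=lambda item: item[2])[:limit]

# Made-up scores for the phrase "I think".
result = [
    WordScore("I", 95, [SyllableScore("I", 95, [PhonemeScore("aɪ", 95)])]),
    WordScore("think", 62, [SyllableScore("think", 62, [
        PhonemeScore("θ", 31),
        PhonemeScore("ɪ", 88),
        PhonemeScore("ŋ", 80),
        PhonemeScore("k", 90),
    ])]),
]

for word, phoneme, score in weakest_phonemes(result, limit=2):
    print(f"{word}: /{phoneme}/ scored {score}")
```

Sorting the flattened phoneme list is what turns a wall of numbers into a short practice list: the lowest-scoring sounds come first.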

How it works under the hood: forced alignment + acoustic model

The core technique is called forced alignment. Picture two parallel tracks:

- Expected track: the phonetic transcription of the sentence in standard American English phonemes (for example, "I really like chocolate" is /aɪ ˈrɪli laɪk ˈtʃɑːklət/ in IPA)

- Real track: the audio you recorded, converted into a spectrogram

The system uses an acoustic model, a neural network trained on thousands of hours of speech, to estimate how likely each phoneme is in every short slice of the spectrogram. Because the expected phoneme sequence is already known, the alignment algorithm only has to find where each expected phoneme starts and ends in your audio. How well the audio inside each segment matches its expected phoneme (the "distance" between what you said and the ideal) becomes your score.
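The alignment idea can be sketched in a few lines of Python. This is a toy version under stated assumptions: the acoustic model is replaced by a hand-written table of per-frame phoneme probabilities, the alignment is a plain Viterbi pass, and the 0 to 100 score is simply the geometric mean of the expected phoneme's probability inside its segment. Function names and the scoring formula are illustrative and not how any particular service computes its scores.

```python
import math

FLOOR = math.log(1e-6)  # assumed log-probability for phonemes missing from a frame

def forced_align(frame_logprobs, expected):
    """Viterbi forced alignment.

    frame_logprobs: one dict per audio frame, mapping phoneme -> log-probability
    expected: the phonemes the speaker was asked to say, in order
    Returns a list of (phoneme, start_frame, end_frame_exclusive).
    """
    T, N = len(frame_logprobs), len(expected)
    if N == 0 or T < N:
        raise ValueError("need at least one frame per expected phoneme")
    NEG = float("-inf")
    # best[t][j]: best total log-prob of frames 0..t with frame t on expected[j]
    best = [[NEG] * N for _ in range(T)]
    stayed = [[False] * N for _ in range(T)]  # True if frame t-1 was also on j
    best[0][0] = frame_logprobs[0].get(expected[0], FLOOR)
    for t in range(1, T):
        for j in range(N):
            stay = best[t - 1][j]
            advance = best[t - 1][j - 1] if j > 0 else NEG
            prev = max(stay, advance)
            if prev == NEG:
                continue  # expected[j] cannot be reached by frame t
            best[t][j] = prev + frame_logprobs[t].get(expected[j], FLOOR)
            stayed[t][j] = stay >= advance
    # Trace back from the last frame, which must sit on the last phoneme.
    path = [0] * T
    j = N - 1
    for t in range(T - 1, 0, -1):
        path[t] = j
        if not stayed[t][j]:
            j -= 1
    # Merge runs of frames that share a phoneme index into segments.
    segments, start = [], 0
    for t in range(1, T + 1):
        if t == T or path[t] != path[t - 1]:
            segments.append((expected[path[start]], start, t))
            start = t
    return segments

def phoneme_scores(frame_logprobs, segments):
    """Toy 0-100 score: geometric mean probability of the expected phoneme."""
    scores = []
    for phoneme, start, end in segments:
        total = sum(frame_logprobs[t].get(phoneme, FLOOR) for t in range(start, end))
        scores.append((phoneme, round(100 * math.exp(total / (end - start)))))
    return scores

# Made-up model output for "thing" where the speaker said /s/ instead of /θ/.
probs = [
    {"θ": 0.20, "s": 0.70, "ɪ": 0.05, "ŋ": 0.05},
    {"θ": 0.30, "s": 0.60, "ɪ": 0.05, "ŋ": 0.05},
    {"θ": 0.05, "s": 0.05, "ɪ": 0.85, "ŋ": 0.05},
    {"θ": 0.05, "s": 0.05, "ɪ": 0.80, "ŋ": 0.10},
    {"θ": 0.02, "s": 0.03, "ɪ": 0.15, "ŋ": 0.80},
    {"θ": 0.02, "s": 0.03, "ɪ": 0.05, "ŋ": 0.90},
]
frames = [{ph: math.log(p) for ph, p in frame.items()} for frame in probs]
expected = ["θ", "ɪ", "ŋ"]

segments = forced_align(frames, expected)
print(segments)
print(phoneme_scores(frames, segments))
```

In this example the first two frames are still assigned to /θ/, because the expected sequence forces it, but the model thinks they sound more like /s/, so /θ/ gets a low score while the other two phonemes score high. That is the sense in which the alignment is "forced".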

What the score actually measures

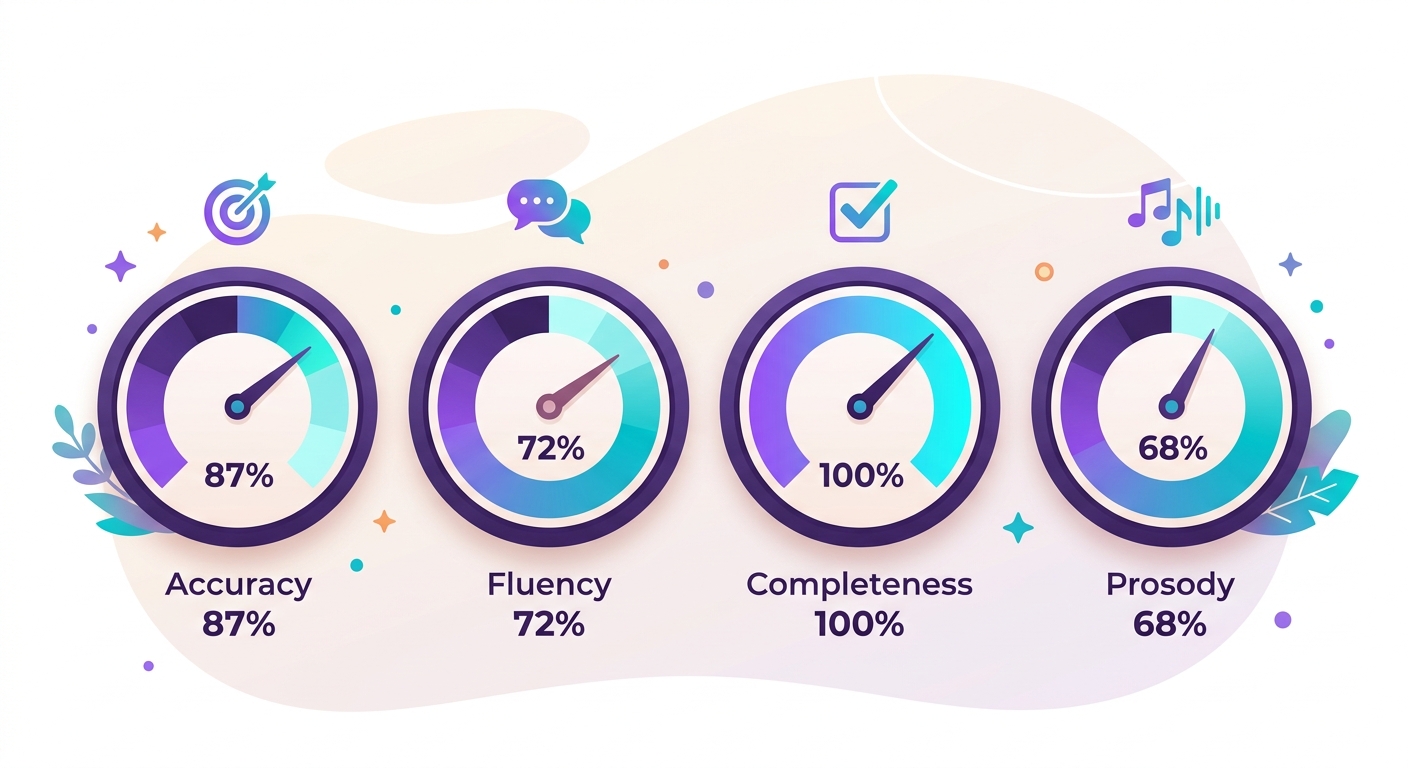

A solid pronunciation assessment API (like Brainiall's) returns four dimensions:

- Accuracy (0–100): how close each sound you produced is to the native phoneme

- Fluency (0–100): rhythm, pauses, naturalness — a choppy read loses points even if every word in isolation is perfect

- Completeness (0–100): did you read the entire sentence, or did you skip words?

- Prosody (0–100): intonation, stress on the right syllables, the overall melody of the sentence

As a rough guide, an overall score above 80 means "understandable to any native speaker," and above 90 means "sounds nearly native." A score below 60 indicates that specific phonemes need targeted practice.
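The four dimensions and the score bands can be written down as a small sketch. Everything here is an assumption for illustration: the `Assessment` class, the plain average used for the overall score (a real service may weight the dimensions differently), and the neutral message for the 60 to 80 range, which the bands above do not label.

```python
from dataclasses import dataclass

@dataclass
class Assessment:
    """Hypothetical container for the four 0-100 dimensions."""
    accuracy: float
    fluency: float
    completeness: float
    prosody: float

    def overall(self) -> float:
        # Assumption: unweighted mean of the four dimensions.
        return (self.accuracy + self.fluency + self.completeness + self.prosody) / 4

def interpret(overall: float) -> str:
    """Map an overall score to the bands described in the article."""
    if overall > 90:
        return "sounds nearly native"
    if overall > 80:
        return "understandable to any native speaker"
    if overall < 60:
        return "specific phonemes need targeted practice"
    # The article gives no label for 60-80; this message is a placeholder.
    return "getting there: keep practicing"

attempt = Assessment(accuracy=85, fluency=80, completeness=100, prosody=75)
print(attempt.overall(), "->", interpret(attempt.overall()))
```

Keeping the thresholds in one function makes it easy to adjust them if the scoring service documents different cut-offs.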

Cases where AI still falls short of a teacher

None of this fully replaces lessons with a teacher. A human is still necessary in these situations:

- Specific regional accents: the model compares against "General American," which may penalize you if your goal is British or Australian English

- Culturally accurate intonation: sarcasm, rhetorical emphasis, humor — these require nuanced feedback

- Motivation and strategy: a teacher tailors exercises to your personal goal (travel, job interview, exam)

- Free conversation: speaking alone doesn't train the listening-and-responding-in-real-time side of the skill

Why starting with Brainiall still makes sense

Even with the limitations above, a pronunciation assessment API covers about 90% of what an in-person lesson would, and it offers:

- Daily practical preparation (10–15 minutes before work or after dinner)

- Laser focus on the specific phonemes you know you struggle with (/θ/ vs /s/, long vs short vowels)

- Zero cost on Brainiall's free starter plans

- Available 24/7 with no scheduling needed

Try it right now

Open the Brainiall chat, ask "give me an English sentence to practice my pronunciation," record your reading using the microphone button, and send it. You'll get all four scores plus word-by-word feedback in seconds. The free plan allows up to 3 attempts per month; the Pro plan at $29 unlocks daily use.